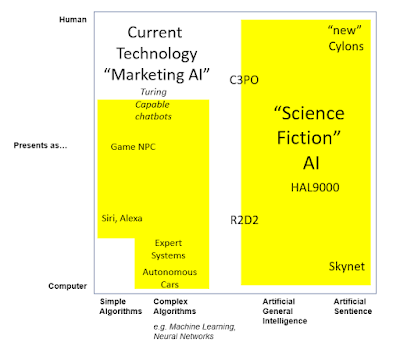

Following on from the previous post I now want to look at what happens when we try and move out of the "marketing AI" box and towards that big area of "science fiction" AI to the right of the diagram. Moving in this direction we face two major challenges, #2 and #3 of our overall AI challenges:

Challenge #2: Generalism

Probably the biggest "issue" with current "AI" is that it is very narrow. It's a program to interpret data, or to drive a car, or to play chess, or to act as a carer, or to draw a picture. But almost any human can make a stab at doing all of those, and with a bit of training or learning can get better at them all. This just isn't the case with modern "AI". If we want to get closer to the SF ideal of AI, and also to make it a lot easier to use AI in the world around us, then what we really need is a "general purpose AI" - or what is commonly called Artificial General Intelligence (AGI). There is a lot of research going into AGI at the moment in academic institutions and elsewhere, but it is really early days. A lot of the groundwork is just giving the bot what we would call common sense - just knowing about categories of things, what they do, how to use them - the sort of stuff a kid picks up before they leave kindergarten. In fact one of the strategies being adopted is to try and build a virtual toddler and get it to learn in the same way that a human toddler does.

Whilst the effort involved in creating an AGI will be immense, the rewards are likely to be even greater - as we'd be able to just ask or tell the AI to do something and it would be able to do it, or ask us how to do it, or go away and ask another bot or research it for itself. In some ways we would cease to need to program the bot.

Just as a trivial example, but one that is close to our heart: if we're building a training simulation and want to have a bunch of non-player characters filling roles then we have to script each one, or create behaviour models and implement agents to then operate within those behaviours. It takes a lot of effort. With an AGI we'd be able to treat those bots as though they were actors (well, extras) - we'd just give them the situation and their motivation, give some general direction, shout "action" and then leave them to get on with it.
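To make the two current options a bit more concrete, here is a minimal Python sketch of a hand-scripted extra next to a very simple rule-based behaviour model. It is purely illustrative: the names (`SCRIPTED_NURSE`, `run_script`, `Agent`, the situation keys `alarm` and `patient_waiting`) are all hypothetical and not taken from any real simulation toolkit, and a real behaviour model would be far richer than an ordered list of rules.

```python
from dataclasses import dataclass, field

# Option 1: a fully scripted extra. Every step is authored by hand
# (hypothetical example data).
SCRIPTED_NURSE = ["walk to bed 3", "check chart", "speak to patient", "leave ward"]


def run_script(script):
    """Replay a hand-authored script exactly as written."""
    return list(script)


# Option 2: a behaviour model. Rules are authored once and the agent
# picks an action based on the situation it finds itself in.
@dataclass
class Agent:
    role: str
    # Ordered (condition, action) pairs; the first matching rule wins.
    rules: list = field(default_factory=list)
    default_action: str = "wait"

    def act(self, situation: dict) -> str:
        for condition, action in self.rules:
            if condition(situation):
                return action
        return self.default_action


nurse = Agent(
    role="nurse",
    rules=[
        (lambda s: s.get("alarm", False), "respond to alarm"),
        (lambda s: s.get("patient_waiting", False), "speak to patient"),
    ],
)

print(run_script(SCRIPTED_NURSE))
print(nurse.act({"alarm": True, "patient_waiting": True}))
print(nurse.act({}))
```

Either way, somebody still has to write every script line or every rule by hand, which is exactly the effort an AGI "extra" would spare us.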

Note also that moving to an AGI does not imply ANY linkage to the level of humanness. It is probably perfectly possible to have a fully fledged AGI that only has the bare minimum of humanness in order to communicate with us - think R2D2.

Challenge #3: Sentience

If creating an AGI is probably an order of magnitude greater problem than creating "humanness", then creating "sentience" is probably an order of magnitude greater again. There are, though, two extremes of view here:

- At one end many believe that we will NEVER create artificial sentience. Even the smartest, most human-looking AI will essentially be a zombie: there'll be "nobody home", no matter how much it appears to show intelligence, emotion or empathy.

- At the other, some believe that if we create a very human AGI then sentience might almost come with it. In fact, just thinking back to the "extras" example above, our anthropomorphising instinct almost immediately starts to ask "well what if the extras don't want to do that..."

We also need to be clear about what we (well I) mean when I talk about sentience. This is more than intelligence, and is certainly beyond what almost all (all?) animals show. So it's more than emotion and empathy and intelligence. It's about self-awareness, self-actualisation and having a consistent internal narrative, internal dialogue and self-reflection. It's about being able to think about "me" and who I am, and what I'm doing and why, and then taking actions on that basis - self-determination.

Whilst I'm sure we could code a bot that "appears" to do much of that, would that mean we have created sentience - or does sentience have to be an emergent behaviour? We have a tough time pinning down what all this means in humans, so trying to understand what it might mean for an AGI (and code it, or create the conditions for the AGI to evolve it) is never going to be easy.

So this completes our chart. To move from the "marketing" AI space of automated intelligence to the science-fiction promise of "true" AI, we face three big challenges, each probably an order of magnitude greater than the last:

- Creating something that presents as 100% human across all the domains of "humanness"

- Creating an artificial general intelligence that can apply itself to almost any task

- Creating, or evolving, something that can truly think for itself, have a sense of self, and which shows self-determination and self-actualisation

It'll be an interesting journey!